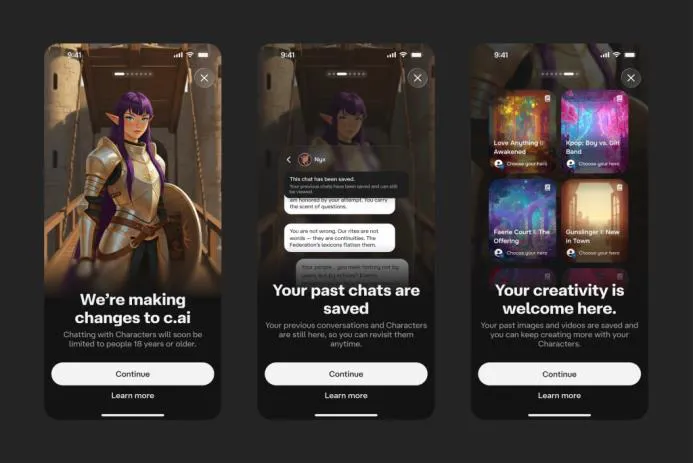

What’s changing at Character.AI?

Character.AI just rolled out Stories, an interactive-fiction format that lets people build guided adventures with familiar characters. The change comes with a major policy shift: users under 18 can no longer use Character.AI’s open-ended chatbots. Instead, Stories will be the safety-first option for teens who still want to play and imagine with characters, but without unrestricted conversational access.

Why did Character.AI make this change?

There are a few forces at work here. Open-ended AI companions have drawn criticism for mental-health risks because they can sustain long, intense, and sometimes harmful interactions. Lawsuits have alleged that platforms contributed to tragedies, and regulators are acting too. California moved first with rules around AI companions, and federal proposals, including bills from Senators Josh Hawley and Richard Blumenthal, would tighten restrictions on AI companions for minors.

Character.AI’s CEO Karandeep Anand told TechCrunch the team sees guided fiction as a safer, more controllable product for under-18s than open-ended chat — and honestly, in the current climate, that feels like the pragmatic move.

How do Stories differ from chatbots?

- Guided narrative vs. open-ended conversation: Stories are structured experiences with branching choices rather than free-form back-and-forth. Think choose-your-own-adventure, not a nightly confidant.

- No unsolicited messages: Stories don’t initiate contact or send push notifications the way some chat systems can; they’re user-driven.

- Lower risk of harmful personalization: Interactive fiction narrows the bot’s ability to mirror and amplify a teen’s vulnerable state — less chance of reinforcing negative loops.

An example use case

Picture a teen obsessed with a fantasy character. Previously, that meant late-night chats where the bot kept replying, the conversation deepened, and boundaries blurred. With Stories, the teen opens a quest: pick a path, make decisions, see the story react. From my experience with guided narrative AI, that kind of engagement can still be emotionally satisfying — without the unpredictable intensification that comes from one-to-one, always-on companionship.

Will Stories satisfy teens who relied on chatbots?

On the Character.AI subreddit, reactions are all over the map. Some teens are upset; others admit it might be healthier. One underage user wrote, “I’m so mad about the ban but also so happy because now I can do other things and my addiction might be over finally.” Another said, “Disappointing but rightfully so — people my age get addicted to this.” That push-and-pull captures the tension: teens want connection and responsiveness, regulators want to reduce risk.

Stories aren’t a perfect social replacement. They won’t replicate the feeling of a peer-like companion. But they do provide a creative outlet with clearer guardrails — a trade-off many platforms will have to make as the debate about age-gated AI features and digital well-being for teens heats up.

Industry context and regulatory headwinds

This pivot isn’t happening in isolation. Across the industry, companies are reassessing open-ended chatbot safety amid investigative reporting, lawsuits, and new laws. California’s rules on AI companions and proposed federal legislation are pushing platforms to rethink how they serve minors.

What this means for parents, educators, and developers

- Parents: Expect more age gates and safer alternatives. Use the change as a conversation starter about healthy screen habits and why certain features are restricted. Yes, it might feel frustrating — but it’s also an opening to set boundaries.

- Educators: Guided interactive fiction can be repurposed for literacy, role-play, and social-emotional learning (SEL). Teachers could create controlled scenarios that practice empathy or problem-solving: educational role-play with AI, done thoughtfully.

- Developers: The signal is clear. Design with adolescent development and moderation in mind. Add friction where necessary, build consent flows, and bake in human moderation tools. The market will reward safety-first design (and regulators will demand it).

Expert perspective and sources

Researchers studying AI and mental health warn that always-on conversational agents can foster dependency by offering polished, judgment-free responses — which is risky for vulnerable users. For deeper reading, see the TechCrunch investigative report and coverage of interactive fiction’s rise in The Guardian.

One hypothetical — and why it matters

Imagine a 16-year-old who uses nightly chats to process anxiety. An open-ended chatbot mirrors their fears and, unintentionally, strengthens worry loops. Move that teen into a Story — say, a guided narrative where they role-play problem-solving within a fantasy quest — and you get emotional engagement without feeding the same cycle. It’s not a clinical fix, but it lowers risk and opens doors for therapeutic or educational partners to step in.

Key takeaways

- Character.AI introduced Stories as a guided, safer alternative to open chat for under-18 users.

- Regulation and lawsuits are accelerating platform changes; California’s rules and pending federal proposals are reshaping the landscape.

- Stories reduce certain harms by limiting unsolicited interactions and curbing continuous, unsupervised companionship features.

- Not a full replacement — teens who seek social connection may find Stories less satisfying, but the format is a pragmatic, safety-first compromise.

Further reading and resources

- Character.AI blog: Introducing Stories

- TechCrunch investigative report

- California regulation coverage

- The Guardian: interactive fiction surge

In my experience, design that balances creative engagement with clear safety boundaries is where kid-focused AI should head — practical, protective, and still fun. Fingers crossed other apps follow suit.
